Intrinsic AI Assurance: Metacognition and Formally Verified Reasoning in Foundation Model Agents


ABSTRACT

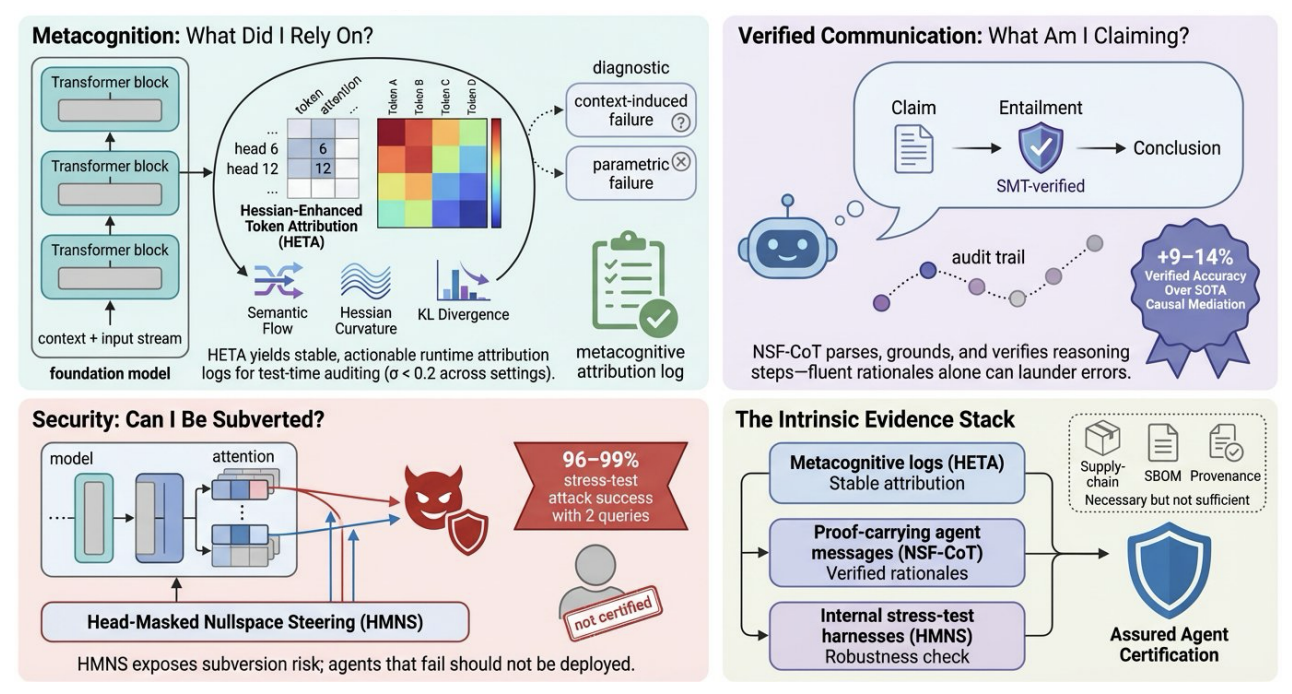

As foundation-model agents assume safety-critical roles in autonomous cyber analysis, embodied AI, and intelligent cyber-physical systems, existing assurance mechanisms (SLSA, SBOMs, benchmark scorecards) remain limited to extrinsic evidence of provenance and output quality. They offer little insight into the computations that produced a given decision. We argue that certifiable assurance cases for such agents require intrinsic evidence at three interfaces, and we present evaluated methods for each. First, Hessian-Enhanced Token Attribution (HETA) produces stable, runtime metacognitive logs that identify which inputs and attention heads drove a prediction, enabling diagnosis of context-induced versus parametric failures. Second, NSF-CoT provides neuro-symbolic formal verification of chain-of-thought reasoning by combining SMT-based decision procedures with counterfactual attribution, yielding composable audit trails that improve verified accuracy by 9 to 14 percentage points over state-of-the-art causal mediation baselines. Third, Head-Masked Nullspace Steering (HMNS) exposes alignment fragility through geometry-aware circuit-level interventions, achieving up to 99% attack success with only 2 queries across four benchmarks and thereby defining a concrete stress-test criterion for agent certification. Together, these contributions form an intrinsic-evidence stack that complements supply-chain tracking. This material is based on our group’s work published at ICLR 2026 (HETA, HMNS) and CVPR 2026 (Contrastive Subnet Erasure).


BIO

Sumit K. Jha is a Professor of Computer Science at the University of Florida. He received his Ph.D. and M.S. in Computer Science from Carnegie Mellon University, where his thesis focused on Bayesian model checking. His research focuses on the interpretability, control, and security of AI systems, combining formal methods, information theory, and geometric analysis to build high-assurance foundation models. He has led projects totaling over $11 million funded by DARPA (GARD, ANSR, TIAMAT), NSF, DOE, ONR, NNSA/ORNL, and AFRL, and has held multiple summer faculty appointments at the Air Force Research Laboratory Information Directorate. His recent work on AI safety and jailbreaking has been published at ICLR 2026, CVPR 2026, and ICML 2025, and has received coverage from UF News, TechXplore, and US Cybersecurity Magazine. He is a recipient of the AFOSR Young Investigator Program Award and multiple best-paper awards.